- Should you be using React for your next project?

- What about the SEO problems it brings along?

- Can we overcome the website ranking barrier with ReactJS?

If only someone could give you proper answers to these questions. We are confident that businesses that want to work with React, but hold back because of its SEO challenges, share the same feeling. ReactJS is undoubtedly an incredible tool for creating dynamic, feature-rich and powerful websites and applications, but enterprises hesitate to adopt it when search engine optimization is at stake.

Well, not anymore. You need answers, and we are all set to offer ready-made, SEO-friendly solutions for your React-driven websites and apps. If you are unfamiliar with how SEO works, do not worry; we have got you covered. This article answers the most frequent SEO-related questions. So, without further ado, let’s take a look at what lies ahead:

- Mechanism of SEO.

- How Google indexes your website.

- How SEO Friendly is React?

- SEO problems with React websites and applications.

- Solving the Problem.

- Bonus Tips and Tools.

Mechanism of SEO-

Search Engine Optimization (SEO) is what brings organic traffic to your website. Its core objective is to improve the quality and quantity of that traffic by earning you a higher ranking on search engines (Google, Yahoo, Bing etc.).

The maths of search engine ranking is really simple. Google holds approximately 92% of the search engine market worldwide, which makes it the primary source of online traffic. Out of all the search requests, a significant share of the clicks goes to the first five links on the results page. The more SEO friendly your content is, the higher you rank. This is why enterprises get extremely competitive with their digital marketing tactics when it comes to SEO.

In simple terms, the online conversion rate of any business relies on SEO. So, how is the ranking of your website in the search engine determined? Let’s find out.

How Google Indexes Your Website?

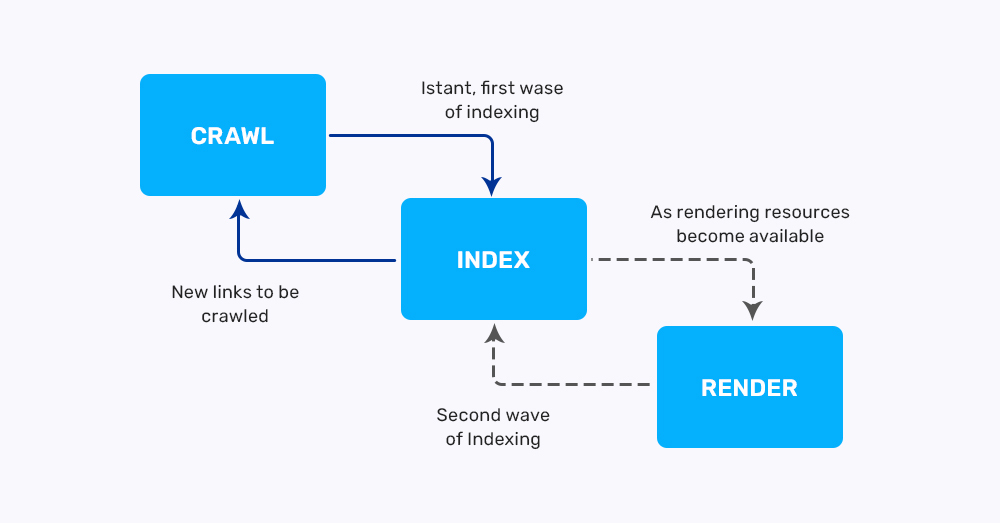

Before we get to SEO-friendly methods, we must understand how the entire process works. Google uses bots (web crawlers) to examine your website or application. These crawlers load your website, analyze it and then rank it based on how many of a set of criteria it fulfills. These criteria can be anything from meta tags to URLs, depending on your content.

Below is a short description of how the web crawler analyzes your website/application:

- The web crawler explores the pages link by link and gathers as much relevant information as possible.

- Further, it examines the gathered content on specific metrics such as content uniqueness, website freshness, number of backlinks etc.

- Once the crawler has checked all of the above factors, it downloads the HTML and CSS files of your React website/application.

- Then all the analyzed data is sent to Google's servers, where the indexing system (Caffeine) confirms the content and indexes it.

- The rank of your website is whatever this system decides.

This is a fully automated process run by Google bots, so the crawlers need to understand your content precisely. And this is where the problems appear.

How SEO friendly is React?

React is a JavaScript library specialized in building responsive and powerful SPAs (Single Page Applications) and website UIs. It is easy to build interactive content with React. For instance, it lets users navigate between pages without reloading, which enhances the user experience. Everything about React is incredible, except SEO.

Now, the challenge is that React-driven SPAs need JavaScript to display content on web pages, and web crawlers are not as good at understanding a JavaScript-driven page as they are at reading plain HTML. For a very long time, Google could not process content generated by JavaScript and effectively indexed sites as if JavaScript were disabled.

That changed in 2015, when the company announced that its web crawlers would not only inspect JavaScript pages but also render them the way browsers do. This was a big relief, but it did not solve every SEO issue with React.

SEO Problems with React websites and Applications:

Many SEO professionals believe that web crawlers have a hard time reading and indexing JavaScript pages as compared to other pages.

Indexing without Content-

Generally, single page applications render pages on the client side. The browser must first download and run the JavaScript bundle, and only after the script has run is the content dynamically loaded into the page.

Since JavaScript needs time to build the page, a web crawler that visits your website before the script has finished gets an empty page with no content. Hence, it indexes the React SPA without content.
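To make the empty-page problem concrete, here is a minimal sketch of what a crawler that does not execute JavaScript can read from a page. The two HTML strings and the `visibleBodyText` helper are our own illustrations, not markup from a real site or part of any crawler.

```typescript
// Raw HTML a typical client-rendered React SPA sends before any script runs.
// The markup is illustrative only.
const spaShell: string = `<!DOCTYPE html>
<html>
  <head><title>My Store</title></head>
  <body>
    <div id="root"></div>
    <script src="/static/js/bundle.js"></script>
  </body>
</html>`;

// The same page when the content is already present in the HTML.
const serverRendered: string = `<!DOCTYPE html>
<html>
  <head><title>My Store</title></head>
  <body>
    <div id="root"><h1>Summer Sale</h1><p>Up to 50% off.</p></div>
    <script src="/static/js/bundle.js"></script>
  </body>
</html>`;

// Hypothetical helper: approximates the text readable inside <body>
// when JavaScript is never executed.
function visibleBodyText(html: string): string {
  const body = /<body[^>]*>([\s\S]*?)<\/body>/i.exec(html);
  const inner = body?.[1] ?? html;
  return inner
    .replace(/<script[\s\S]*?<\/script>/gi, "") // scripts are not content
    .replace(/<[^>]+>/g, " ")                   // drop the remaining tags
    .replace(/\s+/g, " ")                       // collapse whitespace
    .trim();
}

console.log(JSON.stringify(visibleBodyText(spaShell)));       // ""
console.log(JSON.stringify(visibleBodyText(serverRendered))); // "Summer Sale Up to 50% off."
```

The SPA shell carries nothing but an empty `root` element, so there is no text to index until the bundle has run.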

Super Delay-

If you update the content on your site frequently, you want web crawlers to pick up the changes quickly. With JavaScript-rendered pages this becomes a more significant problem, because reindexing can lag by about a week.

This came as a surprise to us as well when Paul Kinlan (a developer on the Google Chrome team) reported in a tweet that reindexing happens only about a week after the content is updated.

After the crawler downloads the HTML, CSS and JS files, the web rendering service has to run the code from these files, fetch the relevant data and send it to Google's servers for confirmation, which is why indexing needs about a week to collect the updated content.

Limited Crawling budget-

Your crawl budget is the maximum number of pages on your website that a crawler can process in a given time. Once it reaches the time limit, the crawler leaves the site, regardless of how many pages it was able to analyze (it could be 12, 26 or even 0). So, if each page takes longer to load because of slow JavaScript, the bot may simply leave the site without indexing it.
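The arithmetic behind the crawl budget can be sketched in a few lines. The budget and the per-page load times below are made-up numbers chosen for illustration; real crawlers do not publish such figures, and `pagesCrawledWithinBudget` is a hypothetical helper.

```typescript
// Counts how many pages a crawler finishes before its time budget runs out.
// loadTimesMs: time each page needs before its content is available.
function pagesCrawledWithinBudget(loadTimesMs: number[], budgetMs: number): number {
  let elapsed = 0;
  let crawled = 0;
  for (const t of loadTimesMs) {
    if (elapsed + t > budgetMs) break; // budget exhausted: the crawler leaves
    elapsed += t;
    crawled += 1;
  }
  return crawled;
}

const budgetMs = 10000; // hypothetical budget of 10 seconds

// 50 pages whose content is ready after 200 ms each.
const fastPages = new Array<number>(50).fill(200);
// The same 50 pages when each one waits 2.5 s for JavaScript to finish.
const slowPages = new Array<number>(50).fill(2500);

console.log(pagesCrawledWithinBudget(fastPages, budgetMs)); // 50
console.log(pagesCrawledWithinBudget(slowPages, budgetMs)); // 4
```

With the same budget, the slow site gets only 4 of its 50 pages analyzed, which is the effect described above.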

Crawlers of other search engines, such as Yahoo, might also see an empty page.

Besides, an SPA that serves every view from a single URL (“one URL for all”) gives crawlers only one page to index, which can also be a major issue in React SEO.

So, how do you tackle these challenges? Are there any specific tools for overcoming the SEO barrier? The wait is over; you are now headed to the ready-made SEO friendly solutions for your React-based applications and websites.

Solving the SEO Problems:

There are a couple of tried and tested methods, recommended by experts, that can address these SEO challenges in your React applications and websites.

Isomorphic React Applications-

An isomorphic React application runs on both the server side and the client side. The best part is that its pages work whether JavaScript is enabled or disabled on the client. We discussed above that SPAs require the browser to run a script in order to load content for the pages. With isomorphic JavaScript, you can render the HTML on the server and serve it to the user already filled with content, without waiting for the browser to run anything. You see where we are going with this?

You will save yourself from the delay of content loading.

Now there are two possibilities when crawlers analyze isomorphic React applications:

- If JavaScript is disabled, the page is rendered on the server, and the crawler gathers all the relevant data (meta tags, content) from the HTML and CSS files.

- If JavaScript is enabled, only the first page is rendered on the server so that the crawler gets the relevant data. All the remaining pages are loaded dynamically on the client.

This makes indexing even faster. So you see, isomorphic apps give you the best of both worlds.
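The idea can be sketched without any framework. In the snippet below, one render function is shared by server and client, and the server places its output directly into the HTML it sends. In a real React app, `renderToString` from `react-dom/server` and `hydrateRoot` from `react-dom/client` play these roles. The names `Product`, `renderProductList`, `renderPage`, `/client.js` and `__INITIAL_STATE__` are assumptions made for this example.

```typescript
interface Product {
  name: string;
  price: number;
}

// Escapes characters that would otherwise be read as HTML.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Shared render function: the same code can run on the server and in the browser.
function renderProductList(products: Product[]): string {
  const items = products
    .map((p) => `<li>${escapeHtml(p.name)}: $${p.price.toFixed(2)}</li>`)
    .join("");
  return `<ul>${items}</ul>`;
}

// Server side: send markup that already contains the content, plus the data
// the client script needs to take over without fetching it again.
function renderPage(products: Product[]): string {
  const state = JSON.stringify(products).replace(/</g, "\\u003c");
  return [
    "<!DOCTYPE html>",
    "<html><head><title>Products</title></head><body>",
    `<div id="root">${renderProductList(products)}</div>`,
    `<script>window.__INITIAL_STATE__ = ${state};</script>`,
    '<script src="/client.js"></script>',
    "</body></html>",
  ].join("\n");
}

const page = renderPage([{ name: "Desk Lamp", price: 19.9 }]);
console.log(page);
```

A crawler that never runs `/client.js` still finds the product list inside `root`, while a browser with JavaScript enabled can reuse the embedded state and continue as a normal SPA.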

This brings us to another issue: developing isomorphic React applications is time-consuming. Luckily, there are two frameworks that can help you speed up the development process. You can consider using Next.js and Gatsby.js for efficient SEO.

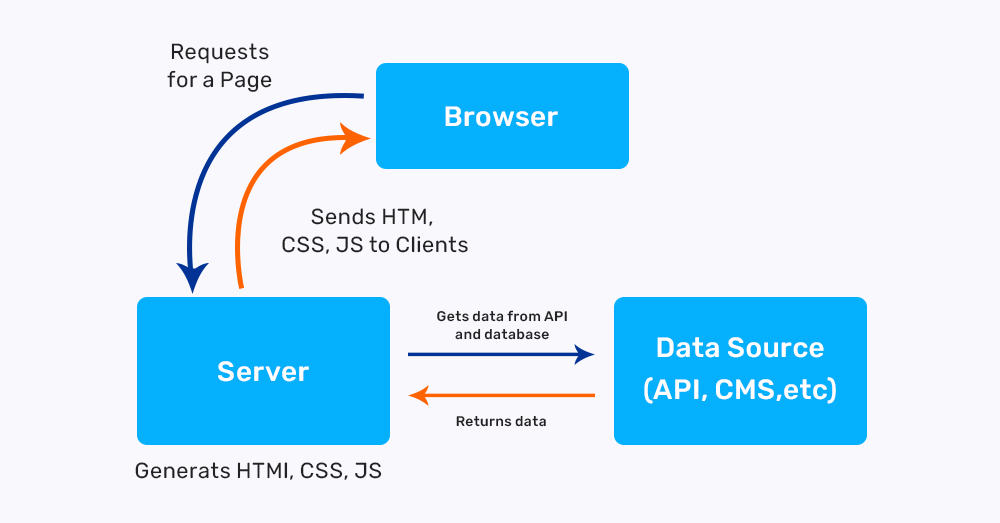

Next.js-

This framework lets you develop isomorphic React applications that are rendered on the server side. It comes with features like hot reloading, code splitting and full-fledged server-side rendering.

Rendering on the server side means your HTML content is generated quickly every time the server receives a request.

Here is how Next.js works with SEO rendering:

- The Next.js server receives the request and matches the URL to a React page.

- The page requests its data from the API, and the data is returned to the Next.js server.

- The server renders the page to HTML and CSS and sends the files to the client within a short time.

- The crawler receives HTML and CSS files with the content already in place.
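The data-fetching step above might look roughly like this in a Next.js page file. `getServerSideProps` is the function name Next.js (Pages Router) looks for; everything else here (`Article`, `fakeApi`, `fetchArticle` and the local `Context` type) is a hypothetical stand-in so that the sketch runs without Next.js installed. In a real page file the function would be exported next to the page component.

```typescript
// Sketch of pages/articles/[slug].tsx, data-fetching part only.

interface Article {
  slug: string;
  title: string;
  body: string;
}

// Stand-in for a real API: hypothetical in-memory data.
const fakeApi = new Map<string, Article>([
  ["react-seo", { slug: "react-seo", title: "React and SEO", body: "..." }],
]);

async function fetchArticle(slug: string): Promise<Article | undefined> {
  return fakeApi.get(slug);
}

// Minimal local type standing in for the context object Next.js passes in.
interface Context {
  params: { slug: string };
}

type Result = { props: { article: Article } } | { notFound: true };

// Runs on the server for every request. The returned props are handed to the
// page component before the HTML is rendered and sent out.
async function getServerSideProps(context: Context): Promise<Result> {
  const article = await fetchArticle(context.params.slug);
  if (!article) {
    return { notFound: true }; // Next.js responds with its 404 page
  }
  return { props: { article } };
}

getServerSideProps({ params: { slug: "react-seo" } }).then((result) => {
  console.log(result);
});
```

Because the data is fetched before rendering, the HTML that reaches the crawler already contains the article.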

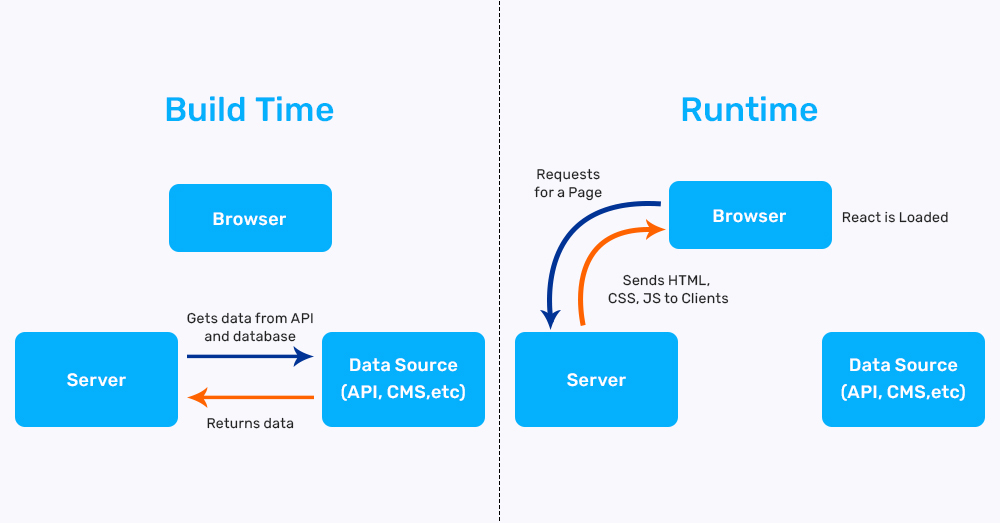

Gatsby.js-

Gatsby.js is an open-source static site generator that helps in developing robust and responsive websites. It does not render pages on the server at request time; instead, it generates your website’s HTML content ahead of time and stores it on Gatsby Cloud or another hosting platform.

Here’s how Gatsby.js works with SEO rendering:

- The Gatsby bundling tools gather data from APIs, files or a CMS.

- At build time, these tools generate HTML and CSS files from the React components in your application/website.

- After the compilation, an index.html file is created for each page and stored on Gatsby Cloud or another hosting platform.
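The build steps above can be sketched as a plain function that turns content records into ready-made HTML files. This is not Gatsby's API, only an illustration of static generation; `Post`, `pageHtml` and `buildStaticSite` are hypothetical names, and the content is assumed to be trusted, so no escaping is done.

```typescript
interface Post {
  slug: string;
  title: string;
  body: string;
}

// Wraps page content in a complete HTML document.
function pageHtml(title: string, content: string): string {
  return `<!DOCTYPE html><html><head><title>${title}</title></head><body>${content}</body></html>`;
}

// Build-time step: every record becomes an index.html under its own path.
// Returns a map of output path to file contents instead of writing to disk.
function buildStaticSite(posts: Post[]): Map<string, string> {
  const files = new Map<string, string>();
  for (const post of posts) {
    const content = `<article><h1>${post.title}</h1><p>${post.body}</p></article>`;
    files.set(`/blog/${post.slug}/index.html`, pageHtml(post.title, content));
  }
  // A home page that links to every post, so crawlers can discover them.
  const links = posts
    .map((p) => `<li><a href="/blog/${p.slug}/">${p.title}</a></li>`)
    .join("");
  files.set("/index.html", pageHtml("Blog", `<ul>${links}</ul>`));
  return files;
}

const site = buildStaticSite([
  { slug: "hello", title: "Hello", body: "First post." },
  { slug: "react-seo", title: "React and SEO", body: "Second post." },
]);
console.log([...site.keys()]);
```

Since all HTML exists before the first visitor arrives, the host only has to serve files, and crawlers get complete pages immediately.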

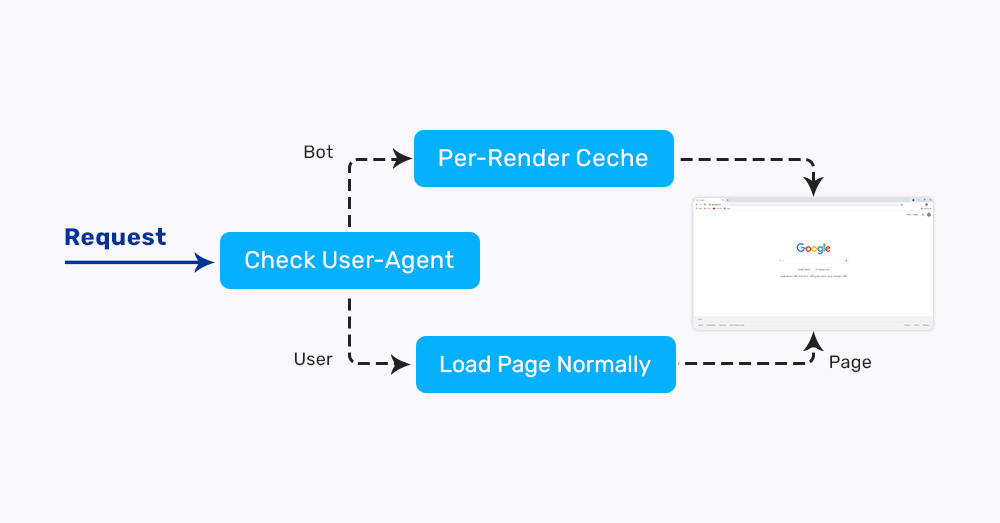

Pre-rendering-

Although isomorphic React applications are the best approach to making your React app SEO friendly, there are other options. You can pre-render your website using a service like “Prerender”, which renders your pages using headless Chrome.

Prerender waits for your pages to load fully and then captures the content as HTML files. This approach brings you certain benefits:

- Your website is crawled correctly by search engines.

- You do not need to change your codebase to set up prerendering on your website.

- Since it is a simple rendering service, it does not put a lot of pressure on your server.
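Prerendering setups typically decide per request whether to serve the captured HTML or the normal SPA, usually by looking at the user agent. Below is a minimal sketch of that decision. The crawler list is partial and illustrative, and `isCrawler` and `chooseResponse` are hypothetical helpers, not functions of the Prerender service.

```typescript
// Partial, illustrative list of crawler user-agent substrings.
const CRAWLER_PATTERN = /googlebot|bingbot|yandex|duckduckbot|baiduspider|slurp/i;

function isCrawler(userAgent: string | undefined): boolean {
  return userAgent !== undefined && CRAWLER_PATTERN.test(userAgent);
}

type Target = "prerendered" | "normal";

// Decides which version of a resource to send back.
function chooseResponse(userAgent: string | undefined, path: string): Target {
  // Static assets are served as usual; only pages are prerendered.
  if (/\.(js|css|png|jpg|svg|ico)$/i.test(path)) return "normal";
  return isCrawler(userAgent) ? "prerendered" : "normal";
}

const googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)";
const chrome = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36";

console.log(chooseResponse(googlebot, "/products")); // prerendered
console.log(chooseResponse(chrome, "/products"));    // normal
console.log(chooseResponse(googlebot, "/bundle.js")); // normal
```

Regular visitors keep getting the interactive SPA, while crawlers receive HTML with the content already in place.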

Apart from these SEO solutions, you can also try some tools to improve optimization further. Tools like React Router v4 and React Helmet can help with SEO up to a point.
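What these tools contribute is, roughly, a separate URL for each view and a matching title and description for each URL. The sketch below shows that idea without either library, so it is not the React Router or React Helmet API. The route table, the `headTagsFor` helper and the `https://example.com` domain are placeholders.

```typescript
interface RouteMeta {
  title: string;
  description: string;
}

// One entry per view: each view gets its own URL and its own metadata.
const routes = new Map<string, RouteMeta>([
  ["/", { title: "Home | Example Store", description: "Lamps, desks and chairs." }],
  ["/pricing", { title: "Pricing | Example Store", description: "Plans and prices." }],
]);

// Builds the <head> tags for a given path.
function headTagsFor(path: string): string {
  const meta = routes.get(path) ?? { title: "Page not found", description: "" };
  return [
    `<title>${meta.title}</title>`,
    `<meta name="description" content="${meta.description}">`,
    `<link rel="canonical" href="https://example.com${path}">`,
  ].join("\n");
}

console.log(headTagsFor("/pricing"));
```

With distinct URLs and head tags per view, a crawler can index each page of the SPA separately instead of seeing one URL for everything.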

Final Thoughts

Thanks to its component-based architecture, React is an ideal platform for creating interactive SPAs and websites. Tech giants like Google, Twitter and Facebook rely on React. We would not want you to avoid using React merely because of the SEO challenges, so we hope this article helps you overcome the SEO barrier. For a smoother run and more customized insights, we would suggest you hire React JS developers or programmers.